# Visualize Decision Tree Python Code

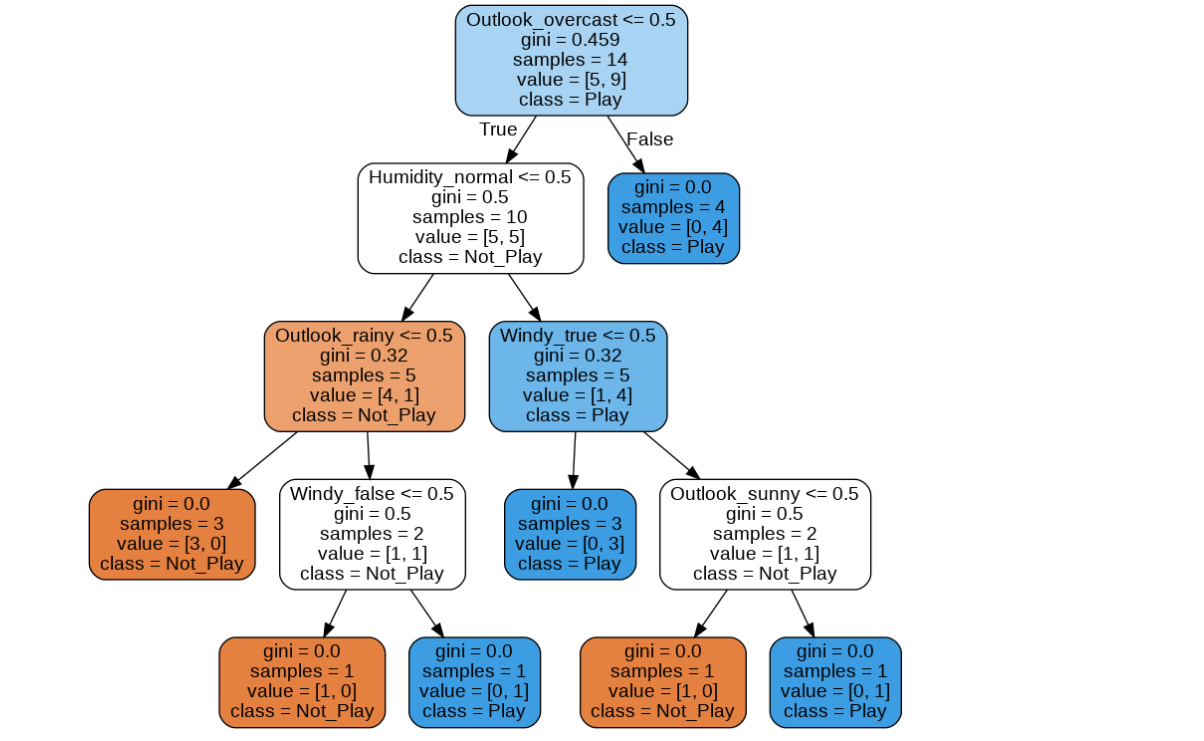

Using a decision tree algorithm, we start at the tree root and split the data on the feature that results in the largest information gain (IG). We repeat this splitting procedure at each child node until the leaves are pure, meaning that the samples at each leaf all belong to the same class. However, this can result in a very deep tree with many nodes, which can easily lead to overfitting. Thus, we typically want to prune the tree by setting a limit for the maximum depth of the tree.

Basically, using IG, we want to determine which attribute in a given set of training feature vectors is most useful. In other words, IG tells us how important a given attribute of the feature vectors is, and we use it to decide the ordering of attributes in the nodes of the decision tree. For a binary split of a parent node into a left and a right child, the IG can be defined as follows:

$$IG(D_p) = I(D_p) - \frac{N_{left}}{N_p} I(D_{left}) - \frac{N_{right}}{N_p} I(D_{right})$$

where $I$ is the impurity measure, $D_p$, $D_{left}$, and $D_{right}$ are the datasets of the parent, left child, and right child nodes, and $N_p$, $N_{left}$, and $N_{right}$ are the numbers of samples in those nodes.

There are three commonly used impurity measures in binary decision trees: entropy, Gini index, and classification error. The entropy is

$$I_H = -\sum_{j} p_j \log_2 p_j$$

where $p_j$ is the probability of class $j$ in the node. The entropy is 0 if all samples of a node belong to the same class, and it is maximal if we have a uniform class distribution. In other words, the entropy of a node consisting of a single class is zero because the probability is 1 and $\log_2 1 = 0$, while the entropy reaches its maximum value when all classes in the node have equal probability.

Entropy of a group in which all examples belong to the same class:

$$I_H = -1 \cdot \log_2 1 = 0$$

Entropy of a group with 50% in either class:

$$I_H = -0.5 \log_2 0.5 - 0.5 \log_2 0.5 = 1$$

So, basically, when entropy is used as the impurity measure, the IG of a split equals the mutual information between the split and the class label, and the tree greedily picks the split that maximizes it.

Similar to entropy, the Gini index

$$I_G = 1 - \sum_{j} p_j^2$$

is maximal if the classes are perfectly mixed. For example, in a binary class setting with $p_1 = p_2 = 0.5$:

$$I_G = 1 - (0.5^2 + 0.5^2) = 0.5$$

The classification error is

$$I_E = 1 - \max_j p_j$$

Next, we'll plot the three impurity criteria discussed above. Note that we also include a scaled version of the entropy (entropy/2) to emphasize that the Gini index is an intermediate measure between entropy and the classification error.
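A minimal sketch of how such a plot could be produced, assuming a two-class node in which class 1 has probability $p$, so that entropy, Gini index, and classification error reduce to functions of $p$ alone. The function names (`binary_entropy`, `impurity_curves`, and so on) and the output file name `impurity.png` are hypothetical; the curves are computed with the standard library, and the drawing step uses matplotlib only if it happens to be installed.

```python
from math import log2


def binary_entropy(p):
    """Entropy of a two-class node where class 1 has probability p."""
    if p in (0.0, 1.0):
        return 0.0  # pure node: -1 * log2(1) = 0, and 0 * log2(0) is taken as 0
    return -p * log2(p) - (1 - p) * log2(1 - p)


def binary_gini(p):
    """Gini index 1 - (p^2 + (1 - p)^2) of a two-class node."""
    return 1 - (p ** 2 + (1 - p) ** 2)


def binary_error(p):
    """Classification error 1 - max(p, 1 - p) of a two-class node."""
    return 1 - max(p, 1 - p)


def impurity_curves(steps=100):
    """Return evenly spaced p values in [0, 1] and the four curves to plot."""
    ps = [i / steps for i in range(steps + 1)]
    ent = [binary_entropy(p) for p in ps]
    return {
        "p": ps,
        "Entropy": ent,
        "Entropy (scaled)": [e / 2 for e in ent],
        "Gini impurity": [binary_gini(p) for p in ps],
        "Misclassification error": [binary_error(p) for p in ps],
    }


if __name__ == "__main__":
    curves = impurity_curves()
    try:
        import matplotlib
        matplotlib.use("Agg")  # render off-screen, no display needed
        import matplotlib.pyplot as plt
    except ImportError:
        plt = None
    if plt is None:
        print("matplotlib not installed; skipping the plot")
    else:
        fig, ax = plt.subplots()
        for label, ys in curves.items():
            if label != "p":
                ax.plot(curves["p"], ys, label=label)
        ax.set_xlabel("p(i=1)")
        ax.set_ylabel("Impurity index")
        ax.legend(loc="upper left")
        fig.savefig("impurity.png")  # hypothetical output file name
```

At $p = 0.5$ the scaled entropy, Gini index, and classification error all meet at 0.5, and for every $p$ the classification error stays at or below the Gini index, which in turn stays at or below entropy/2. That ordering is what the scaled curve is meant to make visible.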
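The impurity measures and the IG formula discussed in this section can be sketched in plain Python as follows. This is a minimal illustration under the assumption that a node is represented simply as a list of class labels; the function names are hypothetical, and this is not scikit-learn's internal implementation.

```python
from collections import Counter
from math import log2


def class_probabilities(labels):
    """Relative frequency p_j of each class present in `labels`."""
    n = len(labels)
    return [count / n for count in Counter(labels).values()]


def entropy(labels):
    """I_H = -sum_j p_j * log2(p_j); absent classes (p_j = 0) never appear."""
    return sum(-p * log2(p) for p in class_probabilities(labels))


def gini(labels):
    """I_G = 1 - sum_j p_j^2."""
    return 1.0 - sum(p * p for p in class_probabilities(labels))


def classification_error(labels):
    """I_E = 1 - max_j p_j."""
    return 1.0 - max(class_probabilities(labels))


def information_gain(parent, left, right, impurity=entropy):
    """IG(D_p) = I(D_p) - N_left/N_p * I(D_left) - N_right/N_p * I(D_right)."""
    n_p = len(parent)

    def weighted(child):
        # An empty child contributes nothing (and would otherwise divide by zero).
        return len(child) / n_p * impurity(child) if child else 0.0

    return impurity(parent) - weighted(left) - weighted(right)


if __name__ == "__main__":
    parent = ["A"] * 40 + ["B"] * 40
    # Two hypothetical candidate splits of the same 80-sample parent node.
    split_a = (["A"] * 30 + ["B"] * 10, ["A"] * 10 + ["B"] * 30)
    split_b = (["A"] * 20 + ["B"] * 40, ["A"] * 20)
    for measure in (entropy, gini, classification_error):
        ig_a = information_gain(parent, *split_a, impurity=measure)
        ig_b = information_gain(parent, *split_b, impurity=measure)
        print(f"{measure.__name__}: A={ig_a:.3f}  B={ig_b:.3f}")
```

The two made-up splits show why the choice of criterion matters: the classification error rates both splits equally (IG = 0.250), whereas entropy (0.189 vs. 0.311) and the Gini index (0.125 vs. 0.167) both prefer split B, which produces one pure child.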
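The greedy tree-growing procedure described in this section (split on the largest IG, recurse into each child, stop when a node is pure or a maximum depth is reached) can be sketched like this. It assumes numeric features, binary `feature <= threshold` splits, and the Gini index as the impurity measure; `grow_tree`, `best_split`, and `predict` are hypothetical names, the toy data set is made up for illustration, and this is not how scikit-learn builds its trees internally.

```python
from collections import Counter


def gini(labels):
    """Gini index 1 - sum_j p_j^2 of a list of class labels."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())


def best_split(X, y):
    """Return (ig, feature, threshold) of the largest-IG split, or None."""
    parent = gini(y)
    best = None
    for f in range(len(X[0])):
        for t in sorted({row[f] for row in X}):
            left = [lab for row, lab in zip(X, y) if row[f] <= t]
            right = [lab for row, lab in zip(X, y) if row[f] > t]
            if not left or not right:
                continue  # a split must send samples to both children
            ig = (parent - len(left) / len(y) * gini(left)
                  - len(right) / len(y) * gini(right))
            if best is None or ig > best[0]:
                best = (ig, f, t)
    return best


def grow_tree(X, y, depth=0, max_depth=3):
    """Recursively split on the largest-IG feature until pure or max_depth."""
    majority = Counter(y).most_common(1)[0][0]
    # Stop when the node is pure or the depth limit (pre-pruning) is reached.
    if len(set(y)) == 1 or depth >= max_depth:
        return {"leaf": majority}
    split = best_split(X, y)
    if split is None:
        return {"leaf": majority}  # all rows identical: nothing left to split on
    _, f, t = split
    left_idx = [i for i, row in enumerate(X) if row[f] <= t]
    right_idx = [i for i, row in enumerate(X) if row[f] > t]
    return {
        "feature": f,
        "threshold": t,
        "left": grow_tree([X[i] for i in left_idx], [y[i] for i in left_idx],
                          depth + 1, max_depth),
        "right": grow_tree([X[i] for i in right_idx], [y[i] for i in right_idx],
                           depth + 1, max_depth),
    }


def predict(tree, row):
    """Follow the thresholds from the root down to a leaf."""
    while "leaf" not in tree:
        go_left = row[tree["feature"]] <= tree["threshold"]
        tree = tree["left"] if go_left else tree["right"]
    return tree["leaf"]


if __name__ == "__main__":
    # Made-up two-feature toy data: class "a" has small x0, class "b" large x0.
    X = [[2.0, 1.0], [3.0, 1.5], [1.0, 3.0], [6.0, 2.0], [7.0, 3.5], [8.0, 1.0]]
    y = ["a", "a", "a", "b", "b", "b"]
    tree = grow_tree(X, y, max_depth=2)
    print(tree)
    print(predict(tree, [2.5, 2.0]), predict(tree, [9.0, 0.0]))
```

On this toy data the root splits on feature 0 at threshold 3.0, which already yields two pure leaves, so the recursion stops before `max_depth` is reached. On noisier data, `max_depth` is what keeps the tree from growing until every leaf is pure.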